An Australian man has been unmasked as an influential player in a new frontier of the artificial intelligence industry that harvests images of real women, without their permission, to create virtual influencers to make money.

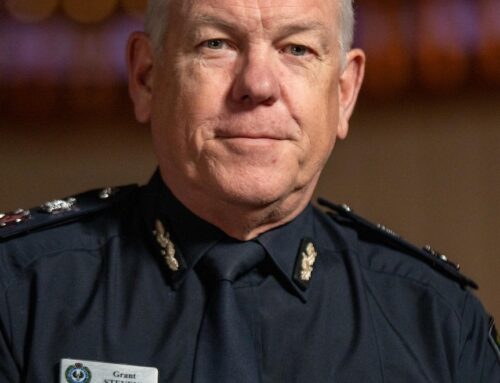

ABC Investigations has identified a north-east Adelaide man, Antonio Alvaro, as a member of an international network of these so-called “AI pimps” that is engaging in mass misuse of women’s images to help sell porn subscriptions.

Alvaro’s activities, which leading intellectual property lawyers describe as “absolutely reprehensible” and not legal, involve creating faces with AI and then transplanting them onto videos of women’s bodies sourced from social media accounts.

These creators then use the fake personas to encourage their followers to join subscription services like Fanvue, an OnlyFans-like platform where they can pay for pornographic images and videos of the AI model.

An example of this kind of image misappropriation can be seen in a recent post by singer Alta Sweet Tabar.

Tabar shared a Christmas-themed video with her followers that was taken by “Australian” AI porn star “Amy Marie Richards”.

The creator of the AI porn star then replaced Tabar’s face with the face of “Amy Marie Richards” and reposted the video to its Instagram account, which promotes its Fanvue page and an AI sex chat bot.

Tabar was reached for comment.

This kind of mass harvesting of content is being carried out by YouTubers and AI entrepreneurs instructing their millions of viewers to use other women’s photos and videos to grow the fake influencer market.

Australian model Robyn Lawley, who has been petitioning the federal government to urgently address AI-driven misappropriation of images, said the activity shown to her by ABC Investigations was “blatant theft”.

“It’s plain theft when they’re saying there’s someone else’s face … and they’re not saying who the original image is from and who the person is,” she said.

She said these creators should “get a real job”.

“Stop making money off our bodies.”

The hunt for Lazman, the porn ‘maestro’

Last year, Antonio Alvaro helped break new ground in the global virtual influencer industry after one of his AI models was featured on the cover of a print magazine.

He has since built his own AI porn empire and now operates some of the world’s most popular AI influencers including “Jenna Dreamz”, “Sarah Jordan”, and “Sophia Stiletto”.

But until now, Alvaro has operated anonymously.

ABC Investigations has tracked Alvaro’s digital footprints to unmask his real identity and traced how he used these fake models to sell AI-generated pornography on subscription-based websites.

Alvaro operated under the aliases “Lazman555”, “Cavv”, and “Shamman” and was part of a community creating modifications and incorporating sexual content for the video game Fallout 4.

Alvaro, using the alias Lazman555, was described as a “maestro” on online forums. His collection of titillating animations and skimpy outfits for the game has been downloaded more than 3 million times since 2016.

ABC Investigations has confirmed Alvaro, Lazman555, and the owner of the three AI models’ accounts — “Sarah Jordan”, “Jenna Dreamz” and “Sophia Stiletto” — are the same person.

A public PayPal account for Lazman555 led the ABC to a now-deleted YouTube channel that contained a dozen videos showing off his cassette decks and analogue audio equipment.

His rare vintage collection revealed another pseudonym Alvaro used on an online marketplace, where a user of a forum provided the ABC with Alvaro’s name, email, and residential address.

ABC Investigations then linked these details to several social media accounts for Alvaro that had been promoting and monetising fake influencer content.

How ‘Sarah Jordan’ became a superstar

One of Alvaro’s Fallout 4 models, called “Sarah Willington”, was built with rudimentary 3D animation software.

Last year, Alvaro turned “Willington” into a new influencer he called “Sarah Jordan”, which has since become one of the world’s most recognisable synthetic faces.

“Sarah Jordan” was the model that graced the May 2023 cover of men’s magazine Autobabes, where it was “interviewed” for its first “AI glamour model” issue, alongside “Dreamz” and “Stiletto”.

The editor of Autobabes, whom the ABC has agreed not to name, confirmed he worked with Alvaro for three weeks on the project in February last year. The ABC understands Alvaro now controls all three AI influencers.

After “Sarah Jordan’s” magazine debut, Alvaro began building the social media following for his fake models by featuring videos and photos of them at fashion shoots, travelling the world, or lounging around in lingerie.

However, a closer look at the footage revealed Alvaro was repurposing videos of real women without their knowledge.

High-profile Sydney model Ella Cervetto is one of many women whose social media videos were used by Alvaro, who substituted the face of “Sarah Jordan” onto videos of her body.

Videos of Miami model Celine Farach were also used by Alvaro. She said AI creators had been taking her work without consent “for some time”.

“It’s out of my control, though,” she said. “I find it sad and dangerous for those who fall for it.”

ABC Investigations also found Alvaro had transposed the face of “Sarah Jordan”, which was designed as a Caucasian Australian, onto the bodies of Hispanic and Latin American models.

Teens used in AI pornstar profiles

“Sarah Jordan” is just one of dozens of AI influencers whose face is being used this way.

One of the most famous and profitable is “Emily Pellegrini”, which is reportedly earning its creator more than $10,000 a month.

Sydney-based model Ella Cervetto’s Instagram videos were repeatedly lifted by the creator of “Emily Pellegrini” through AI face swaps.

ABC Investigations also found content from another Sydney model, Elise Faust, was used to build the profile of the fake influencer called “Amy Marie Richards”.

While many of the victims were celebrities and models, others were ordinary people without public profiles who had posted videos of themselves dancing or on holidays.

ABC Investigations found virtual influencer “Emilie Pavlova” had its face deepfaked into the video of a then-16-year-old make-up artist.

Another AI model, called “Jess Hale”, had lifted a TikTok dance video of a then-15-year-old singer. Several sexualised comments were left by “Jess Hale” fans on the reappropriated footage.

The creators of both “Emilie Pavlova” and “Jess Hale” were also promoting the sale of porn on those accounts. Neither responded to ABC requests for comment.

How-to videos on AI influencers flood internet

A few years ago, crafting realistic deepfakes would have required proficiency in at least one programming language, as well as video and audio editing skills and a significant investment of time.

The rapid advancement of AI image generators, and their ease of use, has changed this.

ABC Investigations has found hundreds of YouTube videos providing step-by-step tutorials on how to build and monetise AI influencers.

One instructional video that has been viewed more than 4 million times showcases ways to circumvent “unwanted safety layers” on popular image generators that prevent nudity and racial caricatures.

In it, the creator explains how these tools allow anybody to become an “AI Pimp” that can generate realistic-looking content to “trick sad, lonely men” or create pornographic images they wouldn’t have to pay for.

Other videos go further by providing explicit instructions on finding women’s social media accounts, taking their images to help build a fake model, and then monetising the fake influencer with porn.

One popular AI tutorial channel that has accrued millions of views walks through how to download and swap a woman’s TikTok video using a “one-click deepfake” tool.

Other videos from the same creator walked viewers through how to create explicit images from celebrity photos and provided advice on different ways to monetise these AI influencers.

These instructional videos were not just demonstrating how to target high-profile models and celebrities without consent.

Another creator showed in near real-time how to lift and face-swap his AI model “Amelia” onto videos he took from Irina Avdeeva, a Russian woman who has only 2,000 followers on Instagram, where she posts about her work in the motorcycle industry.

Avdeeva was not aware her image was being misused until contacted by ABC Investigations.

The AI version of Avdeeva, “Amelia”, sells T-shirts on Instagram. The same channel also has instructional videos on how to make money on Fanvue like “Emily Pellegrini”.

Fake images, real money

ABC Investigations has identified different creators of AI model accounts operating from France, Switzerland, Indonesia, the United States, and Thailand.

These accounts regularly promote each other or generate fake photos of their different AI influencers partying together.

Many of these accounts direct their followers to Fanvue, where “Emily Pellegrini”, the AI model that used Ella Cervetto’s videos without permission, is cited by the company as one of its most successful influencers.

On the “Emily Pellegrini” Fanvue account, many of its nude photos actually belonged to a Brazilian OnlyFans model but with a synthetic face transposed on top. The account also offers a 6-minute shower video for $US369 ($567).

A Fanvue spokesperson said the “Emily Pellegrini” account had “correct permissions” for its content on its platform and did not breach its terms.

“We operate in accordance with regulations, and we have a compliance team dedicated to monitoring both AI and real-life accounts to ensure they operate within the rules. Any that don’t are removed from the platform,” the spokesperson said.

“Emily Pellegrini” also has a “sister” account named “Fiona Pellegrini”, which continues to post AI face-swapped videos.

The creator did not respond to questions about its Instagram account's use of several videos, including some from Ella Cervetto, without permission.

‘Legally speaking … that’s clear copyright infringement’

Almost none of the women whose Instagram images were lifted and who responded to ABC Investigations inquiries were aware their content had been used by AI creators.

University of Sydney law professor and intellectual property expert Kimberlee Weatherall said using images of a woman’s body to create AI influencers was “absolutely reprehensible” and these examples were clear breaches of people’s intellectual property rights.

“My first considered legal opinion is, ick,” Weatherall said, after reviewing the ABC’s findings.

“Legally speaking … if you’ve just lifted other people’s films and then reuse that or a substantial part of the film, that’s a pretty clear copyright infringement with no obvious exceptions that would apply.”

Her assessment was echoed by intellectual property barrister and lecturer Jane Rawlings, who added that many of these AI influencer creators, including Alvaro, could also be contravening consumer law by not clearly disclosing they were synthetic models.

Alvaro’s fake models’ social media accounts had not explicitly mentioned they were created with AI until he was contacted by ABC Investigations.

Alvaro declines ABC questions

Less than a day after ABC Investigations contacted Alvaro, most of the content on his social media accounts and promotional links were deleted.

Alvaro’s lawyer told ABC Investigations that he would not be responding to questions.

The revelations come at a time when governments and technology companies across the world are scrambling to curb non-consensual AI-generated pornography.

Noelle Martin, an expert on image abuse who was once the victim of deepfake porn, said this new frontier jeopardised the safety of every person who has had their image posted online.

“We hear about the implications of this kind of technology for democracy,” she said.

“But what I really worry about is how this impacts our ability to self-determine; to control our lives and our identities … what we say and do … if we do not have the capacity to self-determine, then everything falls apart because we can’t control anything.”

She said many women and girls would not have the platform or resources to debunk material being created of them.

“That is the scary part … when younger and younger people are targeted, we’re going to be living in this dystopian world.”

Watch the ABC investigation into “AI Pimps” on iView or YouTube.
